Find out where to watch Black Panther: Wakanda Forever, the MCU superhero sequel with Letitia Wright, Winston Duke, and Oscar nominee Angela Bassett.

from Digital Trends https://ift.tt/IiGo2BD

Top Tech Blast is a Technology blog with all the latest news related to Technology. Subscribe to Top Tech Blast and Stay updated with the latest happenings in the World of Technology.

Open-source password manager KeePass has refuted claims that it has a major security flaw allowing for undue access to user password vaults.

KeePass is designed primarily for individual use, rather than being a business password manager. It differs from many popular password managers in that it doesn't store its database in cloud servers; instead, it stores them locally on the user's device.

The newly discovered vulnerability, known as CVE-2023-24055, allows hackers who have already gained access to a user's system to export their entire vault in plain text by altering an XML configuration file, completely exposing all their usernames and passwords.

When the victim opens KeePass and enters their master password to access their vault, this will trigger the export of the database to a file that the hackers can steal. The process quietly goes about its business in the background, without notifying KeePass or your operating system, so there is no verification or authentication required, leaving the victim none the wiser.
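For readers who want to check their own setup, the idea of inspecting the configuration file for unexpected triggers can be sketched in Python. This is a minimal sketch under stated assumptions: it assumes triggers appear as XML elements named `Trigger` with a `Name` child, and the sample document and the `list_triggers` helper are made up for illustration, so verify the element names against your own configuration file.

```python
import xml.etree.ElementTree as ET


def list_triggers(xml_text):
    """Return the names of all <Trigger> elements found anywhere in the XML.

    The element names "Trigger" and "Name" are assumptions for this sketch.
    """
    root = ET.fromstring(xml_text)
    names = []
    for trigger in root.iter("Trigger"):
        # findtext returns the default when there is no <Name> child
        names.append(trigger.findtext("Name", default="(unnamed)"))
    return names


# Hypothetical sample configuration, made up for illustration only.
SAMPLE = """
<Configuration>
  <Application>
    <TriggerSystem>
      <Triggers>
        <Trigger>
          <Name>suspicious-export</Name>
        </Trigger>
      </Triggers>
    </TriggerSystem>
  </Application>
</Configuration>
"""

print(list_triggers(SAMPLE))
```

Any trigger you did not create yourself is worth a closer look. As KeePass itself argues, though, an attacker with write access to the file could do far more than add a trigger, so a clean result is not proof of a clean system.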

Users on a SourceForge forum have asked KeePass to require the master password before an export is allowed, or to disable the export feature by default and require the master password to re-enable it.

A workable exploit of this vulnerability has already been shared online, so it is only a matter of time before it is developed further by malware developers and made widespread.

While not denying the existence of the CVE-2023-24055 vulnerability, KeePass's argument is that it cannot protect against threat actors who already have control of your system. They said that threat actors with write access to a user's system could steal their password vault via all sorts of means which it could not prevent.

It was described as a 'write access to the configuration file' issue back in April 2019, with KeePass claiming that it is not a vulnerability pertaining to the password manager itself.

The developers said that "Having write access to the KeePass configuration file typically implies that an attacker can actually perform much more powerful attacks than modifying the configuration file (and these attacks in the end can also affect KeePass, independent of a configuration file protection)".

"These attacks can only be prevented by keeping the environment secure (by using an anti-virus software, a firewall, not opening unknown e-mail attachments, etc.). KeePass cannot magically run securely in an insecure environment", they added.

While KeePass is not willing to add any additional protections to prevent unauthorized export of the XML file, there is a workaround users can try. If they log in as an administrator instead, they can create an enforced configuration file, which prevents the triggering of the export. They first have to make sure that no one else has write access to KeePass files and directories before they activate the admin account.

However, even this is not foolproof, since attackers could run a copy of the KeePass executable in another directory separate to where the enforced config file is stored, which means that, according to KeePass, "this copy does not know the enforced configuration file that is stored elsewhere, [therefore] no settings are enforced."

We're fast approaching Samsung's first Galaxy Unpacked Event of 2023 - and that means we're mere hours away from the probable unveiling of the Samsung Galaxy S23 series.

Yes, after months of rumors and speculation, we'll finally be getting eyes on the company's trio of new flagship phones, alongside a bevy of new laptops (if the leaks hold true).

The Samsung Unpacked event starts at 10am PT / 1pm ET / 6pm GMT tomorrow (February 1), and we'll be with you every step of the way. Samsung will be streaming the whole thing online, and we also have a guide explaining how to watch the Samsung Galaxy S23 launch online live.

But you don't even need to do that, because we'll be at the event ourselves and will be reporting back as Samsung lifts the lid on its latest flagships. So scroll down for more details about what to expect, then keep this page bookmarked for all the last-minute rumors before the event, then all the news once it starts.

Samsung Galaxy S23: The S23 looks like a relatively minor upgrade on the Samsung Galaxy S22, with the same 6.1-inch FHD+ screen, the same 120Hz refresh rate, and the same rear camera setup. But a new chipset - most likely the Qualcomm Snapdragon 8 Gen 2 - looks a cert, and the design should be brought more in line with the S23 Ultra.

Samsung Galaxy S23 Plus: As with the S23, the Galaxy S23 Plus is likely to be an evolution rather than revolution. Expect a bigger 6.6-inch FHD+ screen and a larger battery than on the vanilla model, but not many other differences.

Samsung Galaxy S23 Ultra: The standout reveal at Galaxy Unpacked should be the Samsung Galaxy S23 Ultra. As well as getting a powerful new chipset it's tipped to get a whopping 200MP sensor on the rear camera. Elsewhere, a 6.8-inch QHD+ screen, up to 12GB of RAM, up to 1TB of storage, and a 5,000mAh battery should give it the specs to compete with the best phones.

Samsung Galaxy Book 3 family: Rumors suggest that there will be several Galaxy Book 3 models debuting at Unpacked, including the Samsung Galaxy Book 3 Pro, the Galaxy Book 3 Pro 360, and the Galaxy Book 3 Ultra.

One UI 5.1: The only software reveal at the event is likely to be the latest version of Samsung's One UI. This is unlikely to be a huge release, with bigger changes likely held back for the arrival of Android 14 later this year.

Good afternoon and welcome to our Samsung Galaxy S23 event live blog.

We're just under 24 hours out from Samsung Galaxy Unpacked, which is set to start at 10am PT / 1pm ET / 6pm GMT on February 1 (or 5am AEDT on February 2).

We'll be keeping a close eye on any breaking news ahead of the event, as well as giving you our verdict on the rumors so far. Then, once the event begins, we'll be sharing all the big news as it happens.

So, on with the show…

Intel appears to have quietly killed off its open source RISC-V developer environment, Pathfinder.

The news may come as a shock to many SoC architects, software developers, and product research teams, primarily because Pathfinder was only announced in August 2022. To others, however, it may have been an expected move.

The company reported a catastrophic end to 2022, with its Q4 alone accounting for $661 million in losses, and has pulled the plug on a number of its other operations. Besides this, 544 of its California-based workers are at risk of redundancy, with the potential for more layoffs globally as the company gears up for what it calls a “meaningful number” of job cuts.

The 2022 press release unveiling Pathfinder details the number of RISC-V-focused initiatives that have rolled out over the years, indicating Intel’s commitment. Just months later, however, users began to report that it had been cut.

Intel has since updated its website with a statement that reads:

“We regret to inform you that Intel is discontinuing the Intel Pathfinder for RISC-V program effective immediately.”

The web page directs users to “promptly transition” to alternative RISC-V software tools, highlighting that bug fixes have also been stopped.

The program was designed to help its users develop RISC-V chips using industry-standard toolchains and as such had been supported by a number of RISC-V companies. It was split into a Professional Edition, and a more stripped back Starter Edition for hobbyists looking to give it a go.

Vijay Krishnan remained general manager for RISC-V ventures at the company for over a year and a half until it shut its doors this month, pushing him into a new role as general manager for new initiatives, indicating that Intel is turning its back on its RISC-V operations for now.

TechRadar Pro has asked Intel to confirm its decision to stop the Pathfinder program and whether it plans to continue investing in RISC-V in the future.

Marshall is a brand synonymous with road-worthy guitar amps, but this new portable Bluetooth speaker seems rugged enough to stand up to a world tour with Slash.

The Middleton certainly looks the part, featuring the company’s script logo on a black plastic housing. Made from 55% post-consumer recycled plastic, the casing has an IP67 rating, meaning it offers complete protection from dust as well as being able to stand up to being submerged in 1m of water for at least 30 minutes.

The Middleton sits between Marshall’s recently released Stockwell 2 and Emberton 2, and boasts a multi-directional quad-speaker set-up to take on the best Bluetooth speakers when it comes to audio power from a compact body.

As with the Marshall Emberton 2, this four-speaker array allows the device to utilize Marshall’s bespoke 'True Stereophonic' system, which the company claims creates a more immersive 360-degree sound stage than a standard stereo setup.

There’s a hefty maximum output of 87dB, which sounds like it would be enough to get Lemmy’s approval from beyond the grave, but if that isn’t enough, you can use the device’s multi-speaker Stack Mode and pair it up with other Middletons for even more power.

Unlike the Emberton 2, there are physical bass and treble controls on the top of the speaker, while EQ can also be adjusted via Marshall’s dedicated app for Apple and Android devices.

The Middleton comes with a claimed battery life in excess of 20 hours playtime, with a full charge taking 4.5 hours to bring the juice back up to 100%. Usefully, the battery also acts as a power bank, allowing you to charge your mobile devices on the move.

The Marshall Middleton is available now for £269/€299/$299 direct from marshallheadphones.com.

With its lengthy battery, cool looks and balanced sound, we were impressed with the performance of Marshall’s updated Emberton 2 speaker when it arrived late last year, but questioned its elevated price tag.

The Middleton brings bigger output, IP67 waterproofing and some welcome EQ controls, but there are still some notable features missing that we’d expect at this price.

Chief of these (for some) is the lack of support for smart assistants, with no built-in means of using the likes of Alexa or Google Assistant, which may make the Middleton a hard sell when lined up against others on our best waterproof speakers list, including the cheaper Sonos Roam.

But audio is the most important thing, and if it sounds right, we'll forgive the lack of a tiny rock fairy to talk to.

Russian internet giant Yandex has denied it suffered a cyberattack after some of its internal source code was posted online.

The leaker posted 44.7GB worth of files, which they say are "Yandex git sources", as a torrent on a well-known hacker forum, with much of the company's source code believed to be included.

The files are thought to date back to February 2022, and although the leak does contain some API keys, these are only thought to have been used for testing deployment.

BleepingComputer reports that an initial analysis of the files by software engineer Arseniy Shestakov noted that technical data and code for many of Yandex's top products appeared to be included.

Mail, Disk and Yandex Pay - the company's email, cloud storage and payment processing services respectively - were among the platforms affected. Oddly enough, though, its anti-spam rules were not.

Yandex denied that its systems had been hacked, instead blaming a former employee for leaking the source code repository.

"Yandex was not hacked. Our security service found code fragments from an internal repository in the public domain, but the content differs from the current version of the repository used in Yandex services," the company told BleepingComputer in a statement.

"We are conducting an internal investigation into the reasons for the release of source code fragments to the public, but we do not see any threat to user data or platform performance."

The news comes shortly after the UK's National Cyber Security Centre (NCSC) issued a warning over the continual cyberattacks perpetrated by Russian and Iranian hacker groups.

Although the two groups do not appear to be in collusion, they are separately attacking the same types of organizations, which last year included government bodies, NGOs, and those in the defense and education sectors, as well as individuals such as politicians, journalists and activists.

Via: BleepingComputer

New data has named Kinsta, Liquid Web, and WP Engine as the most reliable WordPress hosting providers based on a study that analyzed historical data on all downtime events for each service.

StatusGator, the data monitoring tool, found that on average, WordPress hosting providers have 11 hours and six minutes of downtime per year.
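To put that average in the terms hosting providers usually advertise, the downtime figure can be converted into an uptime percentage. The short Python sketch below is illustrative only: the `uptime_percent` helper is a hypothetical name, and it assumes a 365-day year.

```python
def uptime_percent(downtime_hours, downtime_minutes, days_in_year=365):
    """Convert a yearly downtime figure into an uptime percentage."""
    downtime = downtime_hours * 60 + downtime_minutes  # minutes of downtime
    total = days_in_year * 24 * 60                     # minutes in the year
    return 100 * (1 - downtime / total)


# The study's average: 11 hours and six minutes of downtime per year.
average = uptime_percent(11, 6)
print(f"{average:.3f}%")  # prints 99.873%
```

On those assumptions, the average provider in the study sits just below the "three nines" (99.9%) uptime level.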

This comes as WordPress, the open-source content management system (CMS), prepares to celebrate its 20th anniversary this year.

WordPress was released on May 27, 2003, by its founders, Matt Mullenweg and Mike Little, as a fork of b2/cafelog.

In a tweet posted on January 25, 2023, WordPress co-founder Mike Little said: “20 years ago today, I commented on Matt's blog & kicked off the project that became WordPress. Now WordPress powers over 43% of most popular domains. It is made by a worldwide community of thousands of contributors & millions of users.”

Matt Mullenweg says he was so lucky that Mike had left that comment, describing that moment in 2003 as “the butterfly effect”.

The official anniversary date for WordPress’ launch is May 27, 2023, and the platform is planning a number of events during the first half of the year to celebrate.

In the study looking into WordPress services, StatusGator listed Flywheel, Cloudways, Hostinger and DreamHost in the top seven most reliable WordPress hosts.

Based on the results, Liquid Web has the fewest hosting-related downtimes at 0.6 per year, while Hostinger has the most at 36.0 per year, and some providers, such as GoDaddy, Anchor Host, and HostPress, were found to not make their downtimes public.

In order to produce the findings, StatusGator divided downtime events into critical and non-critical categories, with shared hosting, VPS, data center, dedicated servers and DNS falling into the critical hosting-related downtime category.

The non-critical downtime observed includes email, help centers and live chat support, cPanel, as well as billing and subscription services.

The default file system for Windows 11 may soon be changing to a new offering designed with high-end servers in mind, but there’s still a long way to go yet.

For more than three decades, Windows machines have used NTFS for all things storage, including internal drives as well as external drives such as USB sticks.

However, release notes for the latest build of Windows 11 (version 25276) detail support for the Resilient File System (ReFS).

ReFS was first introduced with Windows Server 2012, and it’s clearly designed with large amounts of data in mind. Windows Latest notes that NTFS is limited to 256 terabytes (which frankly is more than enough for you or me), but there are some instances where businesses and data centers may need more than this. ReFS raises the limit to 35 petabytes (over 35,000 terabytes).

The Resilient FS promises to be more resilient in that it can detect and repair corruptions while remaining online, and it’s also designed with scalability in mind.

“ReFS is designed to support extremely large data sets - millions of terabytes - without negatively impacting performance, achieving greater scale than prior file systems,” Microsoft noted.

There are some drawbacks, though, especially when it comes to using ReFS for the computers that consumers may end up using. For now, at least, it’s unable to support system compression, encryption, and removable media.

While it could be years before ReFS comes to our homes (if at all), its support in Windows 11 may indicate that it is trickling down into some high-end business machines as it expands outside the realm of servers, but right now, NTFS has nothing to worry about.

Via Windows Latest

Virtualization giant VMware has released patches for four vulnerabilities in its vRealize Log Insight product, two of which have a “critical” severity rating.

The critical pair are CVE-2022-31703 and CVE-2022-31704. The former is a directory traversal vulnerability, while the latter is a broken access control vulnerability. Both were given a 9.8 severity score, and both allow threat actors to access resources that should otherwise be inaccessible.

"An unauthenticated, malicious actor can inject files into the operating system of an impacted appliance which can result in remote code execution," VMware explained.

The other two flaws are CVE-2022-31710 and CVE-2022-31711. The former is a deserialization vulnerability that allows threat actors to tamper with data and launch denial-of-service attacks. It’s been given a 7.5 severity score. The latter is a 5.3-scored information disclosure bug that can be leveraged to steal sensitive data.
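For readers unfamiliar with how numeric scores map to labels such as “critical”, the Python sketch below applies the qualitative severity bands from the CVSS v3.x specification to the four scores quoted above. It assumes the quoted figures are CVSS v3 base scores, which the report does not state explicitly, and `cvss_v3_rating` is a hypothetical helper name.

```python
def cvss_v3_rating(score):
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Scores as quoted in the article; assumed here to be CVSS v3 base scores.
scores = {
    "CVE-2022-31703": 9.8,
    "CVE-2022-31704": 9.8,
    "CVE-2022-31710": 7.5,
    "CVE-2022-31711": 5.3,
}

for cve, score in scores.items():
    print(f"{cve}: {score} -> {cvss_v3_rating(score)}")
```

Under that assumption, the first two flaws land in the Critical band, the deserialization flaw in High, and the information disclosure bug in Medium.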

To protect against the flaws, users are advised to apply the patch immediately and bring their endpoints to version 8.10.2. Those who cannot apply the patch right now can apply a workaround, for which VMware has published instructions.

The flaws were originally discovered by the Zero Day Initiative, The Register confirmed. The program’s members said that so far, there is no evidence of the flaws being abused in the wild.

"We're not aware of any public exploit code or active attacks using this vulnerability," Dustin Childs, head of threat awareness at Trend Micro's ZDI, told The Register. "While we have no current plans to publish proof of concept for this bug, our research in VMware and other virtualization technologies continues."

vRealize Log Insight is a log management tool. Although it’s not as popular as some of VMware’s other solutions, the company’s presence in both the public and private sectors most likely makes all of its products an attractive target for cybercriminals looking for vulnerabilities.

Via: The Register

As Russia’s military was bombarding Ukraine, back at home, Russian companies were being bombarded with Distributed Denial of Service (DDoS) attacks - with such incidents against Russian entities reaching new highs in 2022.

Figures from Rostelecom, Russia's biggest ISP, claim there were 21.5 million DDoS attacks carried out against some 600 organizations in the country in 2022.

Most of the attacks happened in and around Moscow, where the majority of these companies are headquartered. None of the bigger sectors seems to have been spared, with firms in telecom, retail, finance, and the public sector, all experiencing attacks.

The public sector was the most targeted, seeing almost a third (30%) of all incidents (up 12x year-on-year). Financial institutions accounted for a quarter of all attacks (25%), followed by education (16%).

The biggest attack was 760 GB/sec, Rostelecom further said, claiming it had almost double the destructive power of the previous year’s biggest attack. The longest attack, however, lasted almost three months.

Most of the attacks started in March, which coincides with the invasion of Ukraine, which started on February 24. The attacks culminated in May, the firm later said. Based on the IP addresses used, the company concluded that the origin of the majority of the attacks was in the United States.

While DDoS attacks made up the vast majority of all attacks (roughly 80%), there were other types of cyberattacks, as well. Vulnerable websites were also on the radar of western hackers, which abused the flaws to launch arbitrary command execution attacks (10%), path traversal (4%), local file inclusion (3%), SQL injection (3%), and cross-site scripting (1%).

Since the war between Russia and Ukraine began, hackers and hacktivists from all sides have entered the fray, and have been quite active.

Among them was Conti, one of the biggest ransomware operators, which enraged its affiliates (mostly Ukrainians) after openly siding with the Russian government. Conti later backtracked on its statement but the damage had already been done, with one hacker deciding to leak multiple source code versions as well as hundreds of thousands of chat lines between its members.

Via: BleepingComputer

Google Optimize, formerly known as Google Website Optimizer, and Optimize 360 will no longer be available after September 30, 2023.

The freemium web testing and analytics tool allows users to run experiments that help online marketers increase visitor conversion rates on their websites.

Google says that for now, all experiments and personalizations will continue to run, but any that are still active after September 30 will end.

Google Optimize was launched over five years ago with the aim of helping businesses test and improve website user experience.

Google is now encouraging all users to download their historical data from within the Optimize user interface before September 30, 2023.

Additionally, users that want to extend or renew their Google Analytics 360 (Universal Analytics) contracts in the first half of 2023 will be able to renew their Optimize 360 contracts with service dates ending on or before September 30, 2023.

“Those who have signed Google Analytics 4 contracts will not be able to sign Optimize 360 contracts, but will have access to Optimize via the integration in Google Analytics 4 until the September 30, 2023 sunset date,” said Google in a blog post.

“Additionally, for anyone who has signed a Google Analytics 4 contract, but plans to continue to use Google Analytics 360 (Universal Analytics) as they transition over to Google Analytics 4, we will be providing access to Optimize 360 free of charge until the September 30, 2023 sunset date.”

Google also advised users to keep their Optimize containers linked to GA360 properties to do so.

Speaking on the reason behind the discontinuation of both services, Google says Optimize does not have many of the features and services that its customers request and need for experimentation and A/B testing.

The company is, therefore, investing in a replacement solution that will better suit the needs of its customers.

WhatsApp is currently developing a way for users to send images in their original resolution without impacting quality.

WABetaInfo, which discovered the feature, reports users will be able to choose photo quality via a new Settings menu located in the app’s drawing tool. The current version of WhatsApp does allow you to choose “Best Quality” prior to sending images to keep the resolution high, but it still compresses files – just to a lesser extent in order to provide a fast data transfer time. But still, having that newfound level of control will be especially helpful in situations where the quality of a photo is important, as WABetaInfo points out. Not much else is known about the feature, but it’s probably safe to say sending images in their original resolution will most likely increase data transfer time, download time, and the amount of space needed on a device to store said files.

As stated earlier, the original image resolution feature is in development so it won’t be a part of any upcoming WhatsApp betas or launch anytime soon. It’s also worth pointing out that the update was discovered on the Android version of WhatsApp with no mention of whether or not the original image resolution feature will arrive for iOS.

WABetaInfo also uncovered new shortcuts for WhatsApp mobile. These shortcuts will allow group chat admins “to quickly and easily perform actions… [and] simplify some interactions with group members”. The full extent of this feature is unknown, but according to one example, admins can choose to highlight phone numbers whenever someone joins or leaves a group chat. Additionally, admins can create a new context menu for themselves for certain actions like privately calling chat participants or adding them to their contacts.

These shortcuts will be especially helpful when dealing with massive groups. Back in November 2022, WhatsApp launched Communities: large-scale chats that can house 1024 participants. With chats that big, admins need all the tools they can get to manage everything. This shortcut feature will definitely be a major boon for them.

And unlike the original image quality feature, the shortcuts are currently available for both Android and iOS through their respective WhatsApp betas. Unfortunately for iPhone owners, the TestFlight program for WhatsApp is no longer accepting newcomers. If you’re already a participant, you can just download the beta, no problem. Android users can still join the Google Play Store beta program, however.

2023 is slated to be a big year for WhatsApp. January alone has seen WABetaInfo reveal a ton of beta features for the messaging app like the ability to record statuses with your voice and a revamped chat transfer that removes Google Drive from the equation. Be sure to check out TechRadar’s recent WhatsApp beta coverage.

Samsung has patched two vulnerabilities in its mobile app marketplace that could have allowed threat actors to install any app on a target mobile device without the device owner’s knowledge or consent.

Cybersecurity researchers from the NCC Group discovered the vulnerabilities in late December 2022 and tipped Samsung off, with the company issuing a patch (version 4.5.49.8) on January 1, 2023.

Now, almost a month after the flaws were addressed, the researchers have published technical details and proof-of-concept (PoC) exploit code.
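Checking whether an installed Galaxy Store build is at or above the patched version comes down to comparing dotted version numbers field by field rather than as plain strings. The Python sketch below illustrates that comparison; `parse_version` and `is_patched` are hypothetical helper names, and it assumes version strings made up only of dot-separated integers. How to read the installed version from a device is outside its scope.

```python
PATCHED = "4.5.49.8"  # the version the article says contains the fix


def parse_version(text):
    """Turn a dotted version string such as '4.5.49.8' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_patched(installed, patched=PATCHED):
    """Return True when the installed version is at or above the patched one."""
    # Tuples compare field by field, so 4.10.0.0 correctly ranks above 4.5.49.8,
    # which a plain string comparison would get wrong.
    return parse_version(installed) >= parse_version(patched)


for version in ("4.5.48.3", "4.5.49.8", "4.10.0.0"):
    print(version, "patched" if is_patched(version) else "vulnerable")
```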

The first flaw is tracked as CVE-2023-21433, an improper access control flaw that can be used to install apps on the target endpoint. The second flaw, tracked as CVE-2023-21434, is described as an improper input validation vulnerability, which can be used to execute malicious JavaScript on the targeted device.

While exploiting both vulnerabilities requires local access, that is reportedly a non-issue for skilled criminals. The researchers demonstrated the flaws by having the app install Pokemon Go, a globally popular geolocation game based on the world of Pokemon.

While Pokemon Go is a benign app, the flaws could have been used for more sinister goals, the researchers confirmed. In fact, threat actors could have used them to access sensitive information or crash mobile apps.

It also needs to be mentioned that Samsung devices running Android 13 are not vulnerable to the flaws, even if they still carry an older, vulnerable version of the Galaxy Store.

This is due to additional security measures introduced in the latest version of the popular mobile OS.

However, according to figures from AppBrain, just 7% of all Android devices are sporting the latest version, while unsupported versions of Android (9.0 Pie and older) make up roughly 27% of the entire Android market share.

Via: BleepingComputer

You might have thought just about every aspect of the upcoming Samsung Galaxy S23 phones had leaked at this point, but not so – the rumor mill keeps coming up with more information about these flagship devices ahead of their February 1 launch.

Today we've got another tidbit of information from well-known provider of leaks Ice Universe (via GSMArena), who has taken to Chinese social media platform Weibo to give us some details of the portrait video mode on the Galaxy S23 Ultra.

The source says that the mode will be capable of shooting in a 4K resolution at 30 frames per second, with the phone offering relatively good thermal control so that the processing power required to capture clips in this mode doesn't overheat the phone.

We weren't hugely impressed with the portrait video mode on the Galaxy S22 Ultra, especially compared with cinematic mode on the iPhone. In both cases, the subject of a video is kept in focus while the background gets blurred.

The current Samsung Galaxy S22 Ultra can capture normal video at an 8K resolution at 24 frames per second, or at a 4K resolution at 60 frames per second. In portrait mode, that goes down to a 1080p resolution at 30 frames per second.

What's not clear is whether or not the other two Galaxy S23 models are going to get portrait mode this time around. All will be revealed when Samsung's next Unpacked launch event rolls around, and it's only a couple of weeks away.

Based on the rumors we've heard so far, we're expecting the Samsung Galaxy S23 Ultra to come fitted with the new 200MP ISOCELL HP2 sensor that Samsung has revealed. The standard and Plus models, meanwhile, are rumored to be sticking to a 50MP main sensor.

That should mean that the Ultra model is the one to look at for the most substantial camera upgrades over last year's models. So far we've heard that the night vision capabilities will be better, and we've seen sample shots for comparison purposes.

There has also been talk that Samsung is adding more modes on the software side, to go with improvements in the hardware. From a photo and video standpoint, you should be able to do more than ever with the upcoming Galaxy S23 handsets.

In fact there's been so much buzz around this that we think the Galaxy S23 Ultra could be one of the best photo-taking phones of the year – and it might even have more to offer than whatever Apple is plotting with the cameras on the iPhone 15.

Much of Avatar: The Way of Water’s rub-your-eyes-in-disbelief magic stems from its ability to convincingly blur the practical and the digital – and the animators at Wētā FX did such a good job in that department that director James Cameron was often duped into approving entirely computer-generated shots.

In an exclusive interview with TechRadar, Daniel Barrett, Senior Animation Supervisor at the New Zealand-based visual effects company, revealed that he and his team were sometimes forced to side-step Cameron’s desire to keep things as practical as possible in order to maintain the realism of certain shots.

“There’s a lot of interaction between [Na’vi] characters and Spider [played by Jack Champion] in The Way of Water,” Barrett explains, “and getting the kind of contact accuracy that you need in a stereoscopic film can be a real challenge. The planning on set was done to such a high level that many of those shots worked. But there were also times [when they didn’t].

“If you think of those shots where Quaritch is carrying Spider to the drop zone – that was all shot practically, but we realized pretty quickly that there were elements of Jack’s body that we needed to replace with a digital one to make sure we could get all of that contact done. Our digital doubles got to a really high level. We had plenty of situations on the film where we tricked Jim [Cameron] – where he thought we were practical, and we were in fact digital.

“We would make the decision: which is the path of least resistance to give Jim back his plate exactly as he shot it? And sometimes the savings were too great not to go digital [...] But obviously there was still a lot of work to be done there. For the camera team to create matchmoves that are accurate enough to hold up in 3D movies, there’s a real challenge in that. And they did amazing work on this film to reconcile some of those situations for us.”

As someone whose team was “largely responsible for everything that moves” in The Way of Water, Barrett is among the few people who can give an informed answer to the question: how the heck did Cameron pull this off?

If you’ve seen any of the film’s behind-the-scenes featurettes, you’ll know that the processes involved in bringing the entirely fictional world of Pandora to life on screen must have been mind-bogglingly complex. So, naturally, we asked Barrett to explain – in layman's terms – how Wētā turned the likes of Kate Winslet and Cliff Curtis into 10-foot, water-dwelling Na'vi.

“The way we broke it up,” he begins, “there were certain teams for certain sequences, but we also have specialist artists. So, for instance, we have a facial team, who did the lion's share of the facial work, and they sit as a separate department. We have a motion edit team whose starting point is the performance capture data – obviously, they did a huge amount of work on this film. Then we have the animation team, who do a little bit of everything – they’re responsible for all the creatures, vehicles and things like that. And we also have a crowds team, who deal with the bigger crowd [animations], whether it's fish or birds or Metkayina at a village. So all of those groups of people, in those departments from what we call the motion realm, totalled about 150 at our peak.

“So the motion capture is captured – the bulk of that was done at Lightstorm [studios] – and [the footage] is then selected by Jim, whatever he likes,” Barrett continues. “Then it’ll be turned over to Wētā, where it comes through the motion capture team. The data tracking is done at Lightstorm, but we like to re-track it to make sure we maintain all the fidelity and detail of the performances. That will then go through to the motion edit team, who begin work on the bodies – and there’s sometimes a bit of cleanup involved in that [stage]. The motion edit team – also sometimes the animation team – will deal with the bits and pieces that you don’t get to capture,” Barrett explains, giving Na’vi fingers and tails as examples.

“We like the bodies to be pretty much done before we move to facials – and on that note, there’s a huge amount of attention paid to what the head is doing, because your facial animation will fall over if you don’t have a very accurate version of the performance. Once that’s done, we move to facial [animations] – although sometimes, if we realize we’ve missed something with the head, we have to push it back one step. And that’s pretty much the process for performance capture, [with regards] to the motion team.

“Obviously, beyond us,” Barrett adds, “there’s an awful lot of work done prior in terms of the models, the rigging of the characters, the shading and the textures. But once the motion is there, the footage goes through the creatures team, who simulate cloth, costume and hair. And then of course we’ve got a very clever lighting team who work their magic, which is always such a wonderful thing. To see these characters finally rendered… oh, it’s just such a thrill. To have been working on something that looks a little cartoony and then see something that looks like the real thing. It’s such a pleasure, such a gift.”

Judging by The Way of Water’s near-$2 billion global box office receipts, audiences are enjoying the pleasure, too.

Avatar: The Way of Water is now playing in theaters worldwide.

Cumulus Data has announced a new data center in northeast Pennsylvania powered by nuclear energy.

Data centers have typically required enormous amounts of energy to operate, and despite server improvements that have seen decent bumps in efficiency and power in recent years, the industry is still under pressure to clean up emissions as the world gears up towards net zero.

In response, the company is opening its flagship 475-megawatt data center campus, which it calls Susquehanna.

Phase 1 has been completed, meaning that space in the 48-megawatt, 300,000 square foot data center is available to lease.

The 475-megawatt campus will occupy a total of 12,000 acres and is billed as the first of its kind in the US.

Cumulus promises zero-carbon, low-cost, reliable energy powered by the nearby Talen Energy’s Susquehanna nuclear power generation facility.

The new data centers are set to be directly connected to the 2.5-gigawatt power station, which Cumulus says will unlock significant value for its customers “without intermediation by legacy electric transmission and distribution utilities.”

What this means is that it will be able to offer “the most attractive power rate in the US”, alongside offering companies the opportunity to reduce their carbon footprints.

Besides the clear benefits for businesses, the company hopes to create “family-sustaining” jobs and offer training in technology, among other things, to nearby Pennsylvania companies. It also hopes to continue expanding such projects in other areas that Talen Energy operates.

While there are clear concerns about the use of nuclear energy, this does at least represent an important step forward in the decarbonization of data centers.

Nvidia announced this week that the latest version of its subscription service, GeForce Now Ultimate, has officially gone live for several cities in the US, rolling out to San Jose, Los Angeles, and Dallas, as well as Frankfurt, Germany. Areas surrounding these cities will also be able to connect to the new Ultimate tier servers.

This version upgrades GeForce Now's premier RTX 3080 tier and rebrands it as the Ultimate membership, offering the same benefits as the RTX 3080 tier but upgrading the cloud rig to an RTX 4080 GPU.

The service is powered by the Lovelace GPU architecture and, according to Nvidia, streams at up to 240 FPS with NVIDIA Reflex, up to 4K 120 FPS with support for DLSS 3 and RTX ON, and ultrawide support at up to 3,840 x 1,600p resolution at 120 FPS.

We ran the numbers and found that if you paid for the Ultimate subscription tier in six-month increments ($99.99, about £85/AU$145) for six years, it would cost the same as buying the RTX 4080 graphics card at its current MSRP. This makes it an excellent option for those with a solid internet connection who want the performance of the current-gen graphics card without having to pay over $1,000 for it.
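For anyone who wants to check that comparison, here is a minimal sketch of the arithmetic. The $99.99 six-month price comes from the article; the $1,199 figure for the RTX 4080 is an assumed MSRP used only for illustration, and the function names are hypothetical.

```python
import math

# Six-month Ultimate plan price quoted in the article (USD).
SIX_MONTH_PLAN_USD = 99.99
# Assumption: RTX 4080 MSRP in USD; adjust if the list price differs.
RTX_4080_MSRP_USD = 1199.00


def subscription_cost(years: float, plan_price: float = SIX_MONTH_PLAN_USD) -> float:
    """Total cost of back-to-back six-month plans over the given number of years."""
    periods = round(years * 2)  # two six-month periods per year
    return round(periods * plan_price, 2)


def years_to_match(gpu_price: float, plan_price: float = SIX_MONTH_PLAN_USD) -> float:
    """Years of six-month plans needed before spending reaches the GPU price."""
    periods = math.ceil(gpu_price / plan_price)
    return periods / 2


if __name__ == "__main__":
    print(f"Six years of Ultimate: ${subscription_cost(6):,.2f}")
    print(f"Years to match the assumed GPU price: {years_to_match(RTX_4080_MSRP_USD)}")
```

Under those assumptions, twelve six-month payments land within a dollar of the card's price, which is the break-even point the comparison relies on. It ignores the cost of the rest of a gaming PC, electricity, and any future price changes to the subscription.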

"After the start of the rollout of the RTX 4080 SuperPODs today, it’ll start rolling out to other regions, with wider release expected throughout Q1," an Nvidia spokesperson told TechRadar. "On our weekly GFN Thursday blog, we’ll be giving updates each week on which regions are getting RTX 4080 performance."

We previously tried out the RTX 3080 tier for our Acer Chromebook 516 GE review and found the performance on one of the best Chromebooks we've tested to be near indistinguishable from actually running a laptop with the best GPU on the market.

And when we went hands-on with the new Ultimate tier for CES 2023, we found that the performance is even better, as it addresses latency issues that have held the subscription service back. Not only do the upgraded servers bring system latency beneath the 60ms threshold, but Nvidia also claims that by incorporating Nvidia Reflex into its server-side processing, it can bring it down as low as 35ms, which is on par with an actual gaming PC running local hardware.

If this turns out to be true, that would be absolutely huge and make an already great service perfect for hardcore and eventually competitive gaming, maybe even beating out the best gaming PC you can get for a comparable price.

Listen, I would go to more concerts if it wasn’t for the expense, crowds, and rigamarole involved with getting to the venue. Now, though, after an all-too-brief listening session with the new Apple HomePod 2, maybe I don’t need to go anywhere. The music experience is that good.

For as much as Apple’s brand new HomePod 2 looks like its predecessor, it’s really quite different. After Apple’s surprise launch, I took a look at photos of the new audio components versus the old. Basically, everything is different.

Inside the new HomePod 2 is a high-excursion woofer with a custom amplifier, five tweeters (each paired with a custom amplifier), and a far-field array of four microphones. There’s even a sensor that monitors how the system is running (including its internal temperature) to work out if it can crank up the power even further…

Inside the original HomePod vs the 2nd Gen HomePod. Looks like a cleaner construction and some repositioning of audio components like, for instance, the tweeter array. pic.twitter.com/FdsUgqfnkS (January 18, 2023)

Yes, that’s two fewer tweeters than the original HomePod, but this is new hardware, and the tweeters are all tilted up to avoid any audio getting distorted by reflections from whatever surface the speaker is sitting on.

The mesh fabric is more or less the same as the last HomePod, though it’s made from recyclable materials and is designed to have zero muffling impact on audio.

These are all things you can learn by reading the publicly available spec page on the new audio hardware. But, after my listening experience, I think nothing can entirely prepare you for the HomePod 2’s exquisite audio quality.

Because of the HomePod 2’s design, you could expect 360-degree audio, but that simplistic term is misleading. Based on my listening experience, the HomePod 2 uses its wrap-around audio skills, and the technology backing it, to create an impressive, and immersive, audio landscape.

We started with a song called Everybody by Ingrid Michaelson. I sat maybe eight feet away from the speakers in a high-ceiling, mid-sized living room. It’s a beautiful tune, with Michaelson’s clear, bright, plaintive voice front and center. What I noticed immediately, from a single HomePod 2, was the excellent separation of acoustic instruments and her voice. I could clearly pick out a tambourine, guitar, and drum kit as distinct elements in the air. I wish I could’ve listened to the entire song; Michaelson’s voice is kind of magical.

Next up was the funky Six or Seven More by Cool Sounds. With this, I got a chance to experience the surprisingly powerful, rich, and warm bass. What I noticed is how, even with a solid bass beat, the music was never muddy. The HomePod 2 gave me a sense of where the original instruments might’ve been during the recording session.

Part of the HomePod 2’s musical skill can be credited to the S7 chip (yes, the same one that is in the Apple Watch 7) and the application of Advanced Computational Audio. The HomePod 2 is essentially listening to itself and making on-the-fly adjustments to improve audio quality, just like the first one did – but now with more computational power.

The HomePod 2 was adept at delivering aural clarity at everything from 30% to 90% volume. The 90% was loud but not in a bad way. It was a moment where I thought I’d walked into a dive bar to hear a really awesome indie band.

One of the interesting things about the new HomePod 2 is its spatial awareness. As I listened to music from a single and then stereo pair of HomePod 2 devices, I noticed how the sounds often didn’t seem to be coming directly from the HomePods (thanks Spatial Audio!). Some were coming from the left, others from the right, and some (usually, but not always, vocals) from dead center. The most interesting sounds though, were the ones that almost seemed to wash over me; they were bouncing off the back wall (maybe a foot away from the HomePod 2) and then rising and, I’m guessing here, bouncing from the walls to the ceiling to my ears.

The HomePod’s awareness of the space comes courtesy of all those microphones that can read a room in roughly 20 seconds and adjust the audio to make it fit the space. The HomePod 2 even has an accelerometer so it knows when it’s on the move and, with a new song playing in a new space, will quickly readjust.

Listening to Faith by The Weeknd, I could really hear that soundscape of the electronica building in an area behind the HomePod 2s and then slowly moving forward until the whole aural scene washed over me. And of course, the bass was smooth and moving without ever getting in the way of the bright falsetto vocal.

I really enjoyed listening to Boomerang by Yebba. There are so many distinct acoustic instruments that you can pick out, right down to human hands slapping a drum.

A pair of HomePod 2 speakers was even more impressive.

Mystery Lady by Masego and Don Toliver sounded as if it was coming from behind and in front of me. The sound stage was so wide and deep that it didn’t matter where I stood in the room. This is not to say that dead center in front of the speakers was not the optimal aural experience. It was, but I was just as happy to face away or stand in a corner and listen.

A live recording of The Eagles' Hotel California highlighted the speaker’s ability to elevate individual instruments in the intro. I anxiously waited for Don Henley to start singing, not realizing the preamble extends almost a solid minute into the song. [Note from our Audio Editor: "Ah, first time?"] The left and right separation on the song and the HomePod 2 stereo pair’s ability to place the audience's applause and reaction off to the side made it sound and feel like a real live performance.

Taken in the vacuum of this small experience, the new HomePod 2 offers impressive audio chops for a $299 / £299 / AU$479 smart speaker. That’s right, I said smart speaker, because there is so much more that the HomePod 2 can do. But if you want it for nothing more than some of the best music listening you’ve had in a while, it’s probably worth checking out, and the HomePod 2 could be headed to our list of best smart speakers.

Denon is now shipping the AVR-X4800H AV receiver it first announced back in September 2022. That’s great news for home theater fans looking to step up their cinema sound game over what’s delivered by the best Dolby Atmos soundbars, and it should also be of interest to gamers seeking a receiver that’s fully compatible with PlayStation 5 and Xbox Series X | S consoles.

A notable feature of the AVR-X4800H, and one that would rank it among the best AV receivers, is its support for 8K 60Hz and 4K 120Hz video pass-through on multiple HDMI 2.1 ports. Some earlier models from Denon and other AV receiver makers provided either a single full-featured HDMI 2.1 input, or even none at all, while promising a full suite of HDMI 2.1 capabilities would be added via a “future firmware update.”

The AVR-X4800H provides 8K and 4K 120Hz compatibility out of the box, and its other gaming features include support for Variable Refresh Rate (VRR), Quick Frame Transport (QFT), and Auto Low Latency Mode (ALLM). There’s also pass-through support for all key high dynamic range formats: Dolby Vision, HDR10+, Dynamic HDR, and HLG.

Denon’s latest receiver is similarly stacked on the audio side, offering up Dolby Atmos, DTS:X, IMAX Enhanced, and Auro 3D sound processing. Built-in Audyssey MultEQ XT32 room correction lets you fine-tune the interaction between your speakers and listening environment for the best sound, and a firmware update that will enable an upgrade to Dirac Live room correction (at extra cost) is promised for the future. Having used Dirac Live and experienced its sound quality benefits, we find that last feature especially compelling.

That’s not all there is to be said about the AVR-X4800H. With 9 onboard 125-watt amplifier channels, it supports Dolby Atmos configurations that use up to four overhead “height effects” speakers. It also has four subwoofer outputs that can be independently controlled. Denon’s wireless HEOS platform is used for streaming, allowing for high-res audio to be conveyed to the receiver over a Wi-Fi connection.

One irony concerning A/V receivers that have been released over the past few years is that many lacked HDMI 2.1 ports with comprehensive features to support the latest generation of gaming consoles, while numerous soundbars offer such support.

Models like Denon’s AVR-X4800H correct that situation by letting you connect both a PS5 and an Xbox Series X with full pass-through of 4K 120Hz video along with VRR and ALLM support. Oh yes, it also has both 8K video pass-through and upscaling of 4K video to 8K resolution to ensure compatibility with your future 8K TV.

AV receivers like the AVR-X4800H are pricey ($2,499 / £2,000 / AU$3,600) audio components that you’ll want to hang on to for many years – decades, even – so it’s comforting to know that they are available now with a fully up-to-date feature set. Anyone buying Denon’s new receiver will be able to use it well into the future, or at least until virtual reality replaces all other forms of entertainment.

With four independent subwoofer outputs and both Audyssey MultEQ XT32 and Dirac Live support (forthcoming), the AVR-X4800H can be used as the centerpiece of a perfectionist home theater, one with deep, perfectly tuned bass output. Those features are the ones that really make this receiver interesting and different from other options on the market, and we hope to get an opportunity to test it out in the near future.

IT leaders are worried about the security they currently have in place to defend against cyberattacks, but are willing to splash the cash to boost their protections, new research has claimed.

The fourth annual Veeam Data Protection Trends Report surveyed over 4,000 IT leaders and those involved with implementing cybersecurity strategies at various organizations, finding that the adoption of hybrid working has contributed to this feeling of unease.

It noted how new challenges are arising with the increasing shift of digital infrastructure away from premises, as organizations look to cloud document storage and cloud hosting providers, forcing them to raise their IT budgets in response.

In setting goals for the rest of this year, the survey found that IT leaders wanted to prioritize their backup implementations, as well as making sure that Infrastructure as a Service (IaaS) and Software as a Service (SaaS) are just as secure as their datacenter workloads.

As for the organizations themselves, a vast majority felt there was a gap between what they wanted and what their IT teams could deliver. More specifically, 82% felt there was an 'availability gap' between the requested and actual speeds of recovering stored data.

Nearly 80% of organizations also complained about a 'protection gap', with the amount of potential data loss being too great for the frequency at which data was protected by IT departments.

Such gaps are the reason why over half of the organizations surveyed wished to change their protection for this year, and serve as the justification for increased data protection spending too, expected on average to be up by 8.3% for 85% of organizations, which is considerably higher than in other areas of IT spending.

Judging by recent years, such protection is sorely needed. Cyberattacks, especially ransomware, were the biggest disrupters for organizations' systems every year since 2020, with over 80% professing to have been attacked at least once in the last year, up by a huge 76% from Veeam's previous report.

Data recovery was of the utmost importance to them, as only 55% of stolen data could be salvaged. Organizations highlighted “integration of data protection within a cyber preparedness strategy” as the main focus for protection solutions.

A corollary of ransomware attacks, in addition to their initial damage, is the drain they place on the resources and budgets of IT teams, forcing them to postpone upgrades to the digital landscape of the organization and focus on recovery efforts and the fallout from such attacks instead.

Container platforms such as Kubernetes are also growing in popularity - just over half of respondents are running them, and 40% said they planned to. But the report lamented the fact that the "same kinds of data protection strategy disparities as seen in early adopters of SaaS five years ago or virtualization 15 years ago" are being repeated.

The issue is that only the storage is being protected, whilst an overarching approach to protecting workloads is being neglected. The report noted this is typical behavior following the adoption of new platforms.

"Legacy backup approaches won’t address modern workloads - from IaaS and SaaS to containers - and result in an unreliable and slow recovery for the business when it’s needed most", said Veeam CTO Danny Allan.

"This is what’s focusing the minds of IT leaders as they consider their cyber resiliency plan. They need Modern Data Protection."

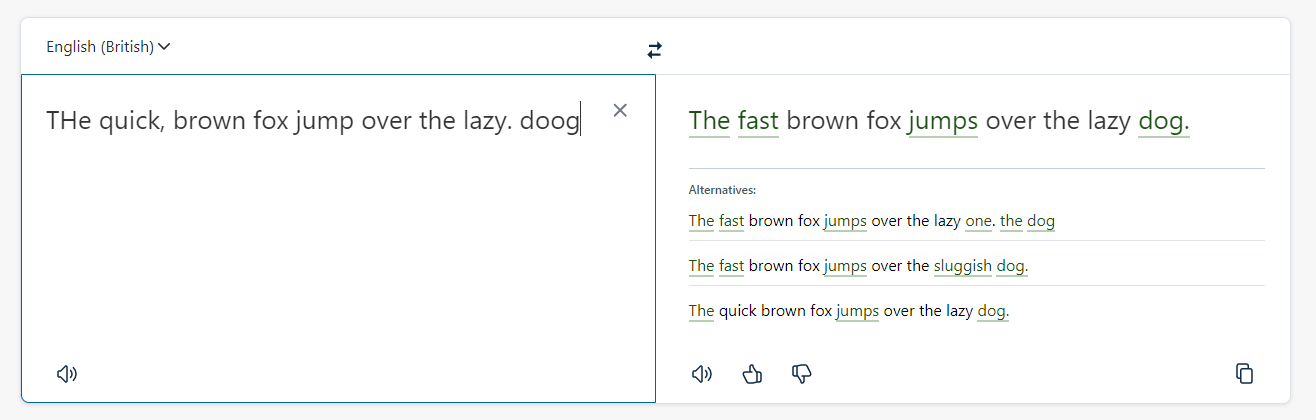

Artificial intelligence is being used to cast its all-seeing eye over the written word with DeepL’s latest free writing app, Write.

Pitched as a rival to Grammarly, the AI writing tool is able to correct spelling and grammar mistakes, rephrase words and sentences, and generally make your writing clearer.

Developer DeepL, which first made waves with its AI-driven translation software, said the tool uses “neural network technology that captures the context and nuances of the original text to provide rephrasing suggestions and alternative word choices.”

Write originated as a way to help DeepL’s multilingual user base check the accuracy of their translations, and the idea behind it is to grant users control over their writing.

Unlike ChatGPT’s essay-generating content, this AI is effectively a smart proof-reader, although the company claimed that by serving up recommended changes across phrasing, tone, style, and word choice, writers can “retain their authentic voice” and creativity.

We gave Write a quick test drive. The interface is clean, with just two text fields and an option to switch languages. British-English, American-English, and German are supported at launch.

Text is pasted in the left field and the results appear to the right. Suggestions are highlighted in that familiar word processor way. Clicking on these in-body links serves up a few more possibilities, too.

Speed was excellent - less than a second to check a single sentence; a few seconds to run its A-eye over a longer piece (although after 2000 characters, you’ll get a prompt to purchase DeepL Pro).

It’s not perfect, though. As with similar writing and writing enhancement apps, suggested changes can feel flat, unemotional, and process-driven. As with any AI content, human refinement is usually necessary and always desirable.

You can give Write a go by clicking here.
